What Are Data Center Migration Tools?

Data center migration tools support the process of relocating data, applications, and workloads from one data center environment to another. These tools provide a structured approach that helps keep the migration efficient, minimally disruptive, and secure.

As organizations evolve, they may need to move to new physical locations, adopt cloud solutions, or upgrade their current infrastructure, prompting the need for migration solutions. These tools help IT departments manage these transitions smoothly, reducing the risk of data loss or downtime.

The benefits of using migration tools include simplified processes, automation capabilities, and improved security measures. By leveraging these tools, organizations can mitigate risks associated with migration, such as data breaches or operational disruptions. The tools also provide insights and analytics that can be used to optimize future migrations.

Editor’s note: Added recent market information and updated information for data center migration tools to reflect features and capabilities in 2026.

Why Is Data Center Migration Important?

Data center migration is important because infrastructure must evolve with business needs. Aging hardware, rising costs, performance limits, and security gaps often make existing environments unsustainable. Migration allows organizations to modernize systems while maintaining business continuity.

Companies also migrate to support cloud adoption, regulatory compliance, mergers, or geographic expansion. Without a structured migration approach, organizations risk downtime, data corruption, and operational disruption.

A well-planned migration improves resilience, scalability, and long-term cost efficiency.

- Improves performance and scalability: Modern infrastructure supports higher workloads, faster processing, and elastic scaling. This ensures systems can handle growth and changing demand.

- Reduces operational costs: Moving from legacy systems to optimized environments lowers maintenance, power, and hardware costs. Cloud migration can also shift spending from capital expenses to operational expenses.

- Enhances security and compliance: New environments often provide stronger security controls, encryption, and monitoring. Migration also helps meet updated regulatory requirements.

- Increases reliability and availability: Modern data centers offer better redundancy and disaster recovery options. This reduces downtime and improves service continuity.

- Supports digital transformation: Migration enables adoption of cloud-native services, automation, and modern application architectures. This helps organizations innovate faster.

Data Center Migration Market Trends

The data center migration market is expanding rapidly. It is projected to grow from $12.26 billion to $26.77 billion by 2029, with a compound annual growth rate above 16%. Growth is driven by stricter regulatory requirements, stronger data protection laws, mergers and acquisitions, and the need for centralized access in remote work environments.
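The growth rate quoted above can be sanity-checked with the standard compound annual growth rate formula. The sketch below is only illustrative: the text does not state the base year, so the five-year span (`ASSUMED_YEARS`) is an assumption, and the function name `cagr` is ours.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.16 means 16%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes from the text, in billions of USD.
# The span is an assumption: the text gives the end year but not the base year.
ASSUMED_YEARS = 5

rate = cagr(12.26, 26.77, ASSUMED_YEARS)
print(f"Implied CAGR over {ASSUMED_YEARS} years: {rate:.1%}")
```

Under that assumed span, the implied rate is a little under 17%, which is consistent with the "above 16%" figure. A different base year would give a different rate.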

Cloud adoption is a major factor behind this expansion. Most enterprises now rely on cloud services, and global cloud infrastructure spending is expected to reach $912 billion. Organizations are moving workloads to cloud platforms to improve scalability, strengthen business continuity, and reduce infrastructure costs. Migration tools play a central role in enabling these transitions while optimizing performance and expenses.

Technology advancements are also shaping the market. Agentic AI-driven orchestration is emerging to automate and coordinate migration tasks. Infrastructure as code is being used to create standardized deployment blueprints and structured landing zones. Vendors such as Amazon Web Services have introduced AI-based services to simplify modernization and reduce the complexity of large-scale migrations.

Regionally, North America leads the market, while Asia-Pacific is expected to grow the fastest through 2029. The Middle East and Africa are also seeing increased investment in cloud and AI infrastructure. Countries such as Saudi Arabia, the UAE, and Qatar are building large-scale data centers and AI hubs, attracting global technology vendors and increasing regional demand for migration services.

Key Features of Data Center Migration Tools

Discovery and Assessment

Discovery and assessment are the initial steps in data center migration, focusing on identifying existing assets and assessing their readiness for migration. These stages involve cataloging hardware, software, and network configurations, identifying dependencies, and evaluating workloads.

Assessment tools evaluate this inventory against the target environment requirements, ensuring compatibility and identifying potential issues before they arise. By conducting rigorous assessments, organizations can prioritize workloads based on complexity, sensitivity, and interdependencies, ensuring a smooth transition to new platforms or environments.
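As a rough illustration of that assessment step, the sketch below checks a small inventory against a target environment's requirements. Everything in it is hypothetical: the `Workload` and `TargetProfile` types, their fields, and the sample values are invented for the example and do not reflect any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class Workload:
    """One discovered asset (hypothetical, simplified schema)."""
    name: str
    os: str
    ram_gb: int
    depends_on: list[str] = field(default_factory=list)


@dataclass
class TargetProfile:
    """What the target environment supports (hypothetical)."""
    supported_os: set[str]
    max_ram_gb: int


def assess(workloads: list[Workload], target: TargetProfile) -> dict[str, list[str]]:
    """Return the readiness issues found for each workload, keyed by name."""
    known = {w.name for w in workloads}
    report: dict[str, list[str]] = {}
    for w in workloads:
        issues = []
        if w.os not in target.supported_os:
            issues.append(f"unsupported OS: {w.os}")
        if w.ram_gb > target.max_ram_gb:
            issues.append(f"needs {w.ram_gb} GB RAM, target allows {target.max_ram_gb} GB")
        # A dependency missing from the inventory suggests discovery is incomplete.
        for dep in w.depends_on:
            if dep not in known:
                issues.append(f"undiscovered dependency: {dep}")
        report[w.name] = issues
    return report


inventory = [
    Workload("web", "ubuntu-22.04", 8, depends_on=["db"]),
    Workload("db", "windows-2008", 64),
    Workload("batch", "ubuntu-22.04", 16, depends_on=["ldap"]),
]
target = TargetProfile(supported_os={"ubuntu-22.04", "rhel-9"}, max_ram_gb=32)

for name, issues in assess(inventory, target).items():
    print(name, "ready" if not issues else issues)
```

Workloads with an empty issue list are candidates for early migration, while the others need remediation or further discovery first.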

Migration Planning and Orchestration

Migration planning involves setting goals, establishing timelines, and assigning roles to team members. Orchestration tools help coordinate complex tasks, provide real-time updates, and automate repetitive processes. The goal is to ensure that all aspects of the migration are synchronized, minimizing the chance of errors and reducing downtime.

A well-orchestrated migration leverages automation to handle complex tasks, reducing the potential for human error. Automation also enables real-time adjustments to address unforeseen challenges.
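One way to picture orchestration is as running tasks in dependency order and stopping as soon as one fails. The sketch below uses Python's standard `graphlib.TopologicalSorter` for the ordering. The task names and the `run_plan` helper are hypothetical, and real orchestration tools add retries, parallelism, and rollback that are left out here.

```python
from graphlib import TopologicalSorter
from typing import Callable, Optional


def run_plan(
    tasks: dict[str, Callable[[], bool]],
    prerequisites: dict[str, set[str]],
) -> tuple[list[str], Optional[str]]:
    """Run tasks so that prerequisites finish first.

    Returns (completed task names, name of the failed task or None).
    """
    # Map every task to the set of tasks that must complete before it.
    graph = {name: prerequisites.get(name, set()) for name in tasks}
    completed: list[str] = []
    for name in TopologicalSorter(graph).static_order():
        if not tasks[name]():
            return completed, name  # halt so later steps never run on a bad state
        completed.append(name)
    return completed, None


# Hypothetical migration steps; each returns True on success.
tasks = {
    "snapshot": lambda: True,
    "copy_data": lambda: True,
    "verify": lambda: True,
    "cutover": lambda: True,
}
prerequisites = {
    "copy_data": {"snapshot"},
    "verify": {"copy_data"},
    "cutover": {"verify"},
}

done, failed = run_plan(tasks, prerequisites)
print("completed:", done, "failed:", failed)
```

Stopping at the first failure is a deliberate simplification: it keeps the cutover from running when verification has not passed.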

Data and Application Migration

Data and application migration focuses on securely transferring data assets and applications to the new environment. These tools help preserve data integrity, security, and consistency during transfer. Migration processes usually include data mapping, transformation, and validation to ensure compatibility with the target system. Automation also helps speed up data transfer while maintaining accuracy and security standards.

Effective data and application migration minimizes risks such as data corruption or application downtime. With pre-defined workflows and automated checks, these tools help maintain business continuity. Comprehensive testing before final cutover ensures that systems operate as expected in the new environment.
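The automated checks mentioned above often come down to comparing what was sent with what arrived. The following is a minimal sketch of one such check, assuming records are available as dictionaries with a unique key. The function names and the sample rows are hypothetical, and the row digest is a simple illustration rather than a production-grade canonical format.

```python
import hashlib


def row_digest(row: dict) -> str:
    """SHA-256 over the row's fields in sorted key order."""
    canonical = "|".join(f"{k}={row[k]}" for k in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare(source_rows: list[dict], target_rows: list[dict], key: str) -> dict[str, list]:
    """Report keys that are missing, unexpected, or different in the target."""
    src = {r[key]: row_digest(r) for r in source_rows}
    tgt = {r[key]: row_digest(r) for r in target_rows}
    return {
        "missing": sorted(src.keys() - tgt.keys()),
        "unexpected": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


source = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": "c@example.com"},
]
target = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "B@example.com"},  # altered in transit
]

print(compare(source, target, key="id"))
```

A clean result (three empty lists) is one piece of evidence that the cutover can proceed. It does not replace functional testing of the applications themselves.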

Learn more in our detailed guide to application migration strategy

Network and Infrastructure Migration

Network and infrastructure migration involves transitioning network components and physical or cloud-based infrastructures. These tools focus on reconfiguring network paths, ensuring compatibility with new systems, and maintaining connectivity. Infrastructure migration may involve moving servers, storage devices, and other hardware.

Tools used for network and infrastructure migration provide automation and monitoring capabilities. Automation ensures configuration accuracy, while continuous monitoring identifies potential issues early. By focusing on maintaining service levels and connectivity, these tools help organizations transition infrastructure components with minimal impact on users.
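A simple example of a configuration accuracy check is confirming that every network flow the applications need is permitted by the rules planned for the new environment. The sketch below uses Python's standard `ipaddress` module. The rule and flow formats, the addresses, and the function names are assumptions made for illustration, not the format of any specific firewall or tool.

```python
import ipaddress


def flow_allowed(flow: dict, rules: list[dict]) -> bool:
    """True if any rule covers the flow's source, destination, and port."""
    src = ipaddress.ip_address(flow["src"])
    dst = ipaddress.ip_address(flow["dst"])
    return any(
        src in ipaddress.ip_network(rule["src_cidr"])
        and dst in ipaddress.ip_network(rule["dst_cidr"])
        and flow["port"] == rule["port"]
        for rule in rules
    )


def blocked_flows(required_flows: list[dict], rules: list[dict]) -> list[dict]:
    """Return the required flows that the planned rules would not permit."""
    return [f for f in required_flows if not flow_allowed(f, rules)]


# Hypothetical allow-rules for the target environment.
planned_rules = [
    {"src_cidr": "10.0.1.0/24", "dst_cidr": "10.0.2.0/24", "port": 5432},
]
# Hypothetical flows observed in the current environment.
required = [
    {"src": "10.0.1.15", "dst": "10.0.2.20", "port": 5432},
    {"src": "10.0.3.7", "dst": "10.0.2.20", "port": 5432},
]

for flow in blocked_flows(required, planned_rules):
    print("would be blocked:", flow)
```

Running a check like this before cutover surfaces connectivity gaps while they are still cheap to fix.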

Security and Compliance

Migration tools have built-in security measures, such as encryption and access controls, to protect sensitive information. Compliance checks ensure that the migration aligns with industry standards and regulatory requirements, avoiding potential legal or operational repercussions.

Security tools provide detailed audit trails and log management capabilities, allowing organizations to monitor activities during migration. Compliance features help ensure that any changes to systems or data structures are documented and meet regulatory obligations.
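To make the idea of an audit trail more concrete, the sketch below records migration actions in a log where each entry includes a hash of the previous one, so later edits to the history become detectable. Hash chaining is our choice of technique for the illustration; the text does not say how any given tool implements its audit trail, and the entry fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(actor: str, action: str, prev: str) -> str:
    body = json.dumps({"actor": actor, "action": action, "prev": prev}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], actor: str, action: str) -> None:
    """Append an entry whose hash covers its content and the previous hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"actor": actor, "action": action, "prev": prev,
                "hash": _digest(actor, action, prev)})


def verify(log: list[dict]) -> bool:
    """Recompute the chain; False means an entry was altered or reordered."""
    prev = GENESIS
    for entry in log:
        expected = _digest(entry["actor"], entry["action"], entry["prev"])
        if entry["prev"] != prev or entry["hash"] != expected:
            return False
        prev = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "alice", "exported schema for db01")
append_entry(audit_log, "bob", "changed firewall rule for app tier")
print("log intact:", verify(audit_log))
```

In practice such a log would also carry timestamps and be stored outside the systems being migrated.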

Notable Data Center Migration Tools

1. Faddom

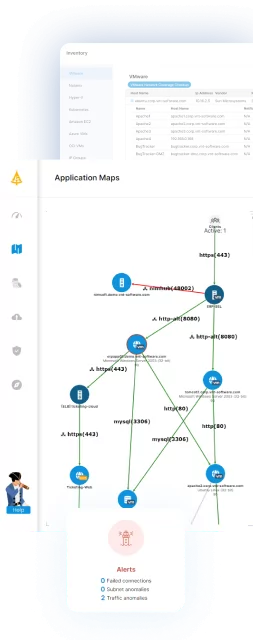

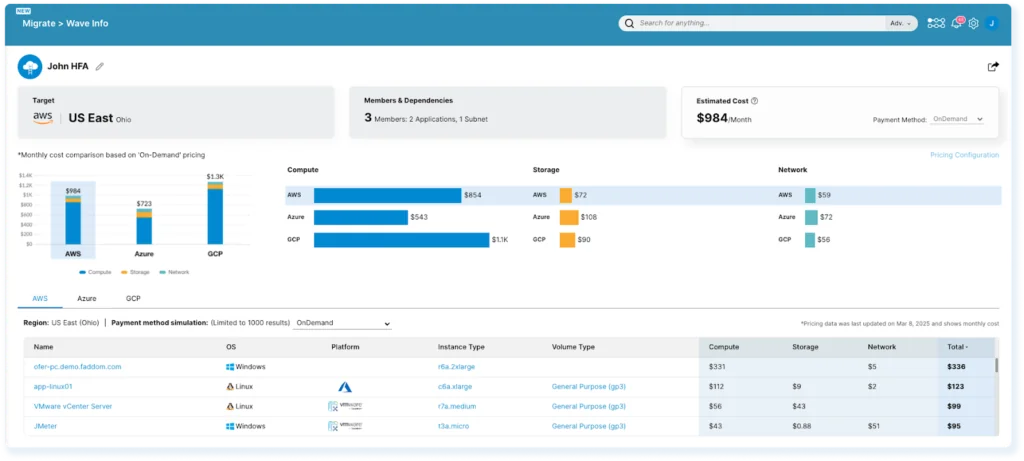

Faddom is an agentless application dependency mapping tool designed to simplify the planning phase of data center migrations. It provides real-time visibility into business applications, server dependencies, and network flows, allowing organizations to build accurate migration strategies with minimal risk. Its visual interface supports wave-based planning and rightsizing decisions for cost-effective, phased transitions across hybrid environments.

Key features include:

- Real-time application dependency mapping: Visualizes all dependencies between servers, applications, and network components without deploying agents.

- Agentless and credential-free deployment: Installs in under 60 minutes without impacting system performance or security.

- Wave-based migration planning: Groups and prioritizes workloads based on actual interdependencies to minimize disruption during phased transitions.

- Rightsizing recommendations: Identifies underutilized resources and redundant infrastructure to optimize future-state environments.

- Hybrid infrastructure visibility: Provides continuous mapping across both on-premises and cloud environments to support complex IT landscapes.

Discover more about Data Center Migration with Faddom

Source: Faddom
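The idea behind wave-based planning can be sketched generically: servers that talk to each other are grouped so that they move together. The example below finds connected groups in a list of observed connections. It is a simplified illustration of the concept, not Faddom's algorithm, and the server names and the `migration_groups` function are hypothetical.

```python
from collections import defaultdict, deque


def migration_groups(servers: list[str], connections: list[tuple[str, str]]) -> list[list[str]]:
    """Group servers that are linked, directly or indirectly, by connections."""
    neighbors: dict[str, set[str]] = defaultdict(set)
    for a, b in connections:
        neighbors[a].add(b)
        neighbors[b].add(a)

    seen: set[str] = set()
    groups: list[list[str]] = []
    for start in sorted(servers):
        if start in seen:
            continue
        # Breadth-first walk collects everything reachable from this server.
        group, queue = [], deque([start])
        seen.add(start)
        while queue:
            current = queue.popleft()
            group.append(current)
            for other in sorted(neighbors[current]):
                if other not in seen:
                    seen.add(other)
                    queue.append(other)
        groups.append(sorted(group))
    return groups


servers = ["web", "app", "db", "batch", "reports"]
connections = [("web", "app"), ("app", "db"), ("batch", "reports")]

for number, group in enumerate(migration_groups(servers, connections), start=1):
    print(f"candidate wave {number}: {group}")
```

Real planning would weigh more than connectivity, for example business criticality and maintenance windows, before assigning groups to waves.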

2. Fivetran

Fivetran is a managed data movement platform that automates the transfer of data from a range of sources to data warehouses, data lakes, and other destinations. It supports large-scale data replication and transformation workflows, enabling organizations to centralize data for analytics, AI, and cloud migration initiatives.

Key features include:

- Extensive connector library: Supports more than 700 connectors across SaaS applications, databases, ERPs, and file systems for automated data ingestion.

- Automated data pipelines: Enables reliable and continuous data movement from source systems to warehouses and lakes with minimal manual configuration.

- Built-in transformations: Provides transformation capabilities and ready-to-use data models to prepare analytics-ready tables.

- Hybrid deployment support: Allows secure data movement across environments without compromising performance.

- Governed data movement: Includes governance controls to help manage, protect, and scale data pipelines.

- Security and compliance certifications: Supports standards including SOC 1, SOC 2, GDPR, HIPAA BAA, ISO 27001, PCI DSS Level 1, and HITRUST.

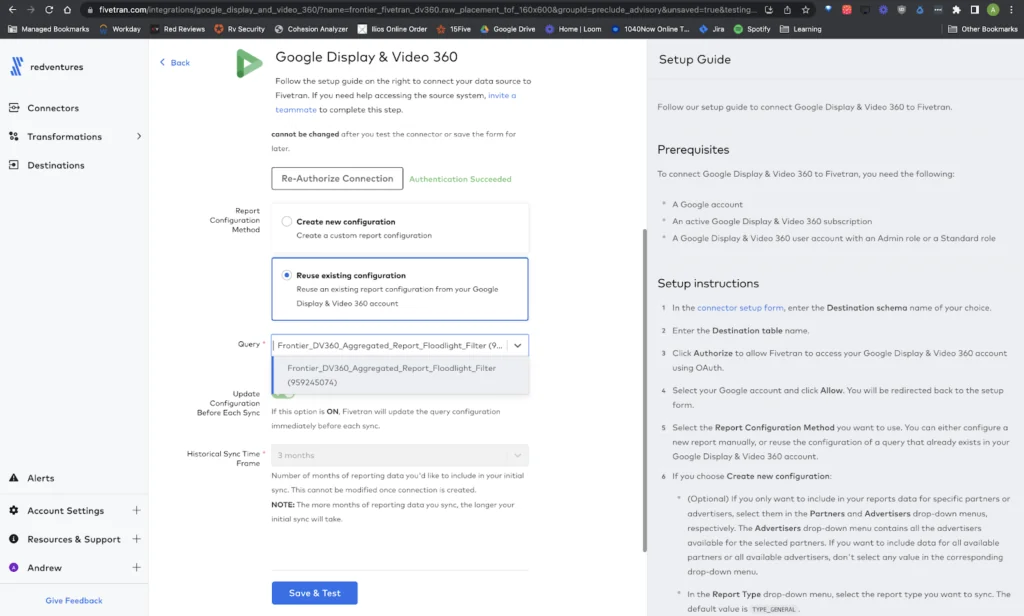

Source: Fivetran

3. Matillion

Matillion is a cloud-native data integration platform built for cloud data platforms such as Snowflake, Databricks, AWS, and Azure. It enables teams to design, build, and orchestrate data pipelines using low-code tools, SQL, Python, and dbt. The platform supports structured and unstructured data ingestion and leverages pushdown processing to execute transformations directly within the target cloud platform.

Key features include:

- Low-code pipeline design: Provides a visual designer to build and manage complex data pipelines through drag-and-drop components.

- Code-based development support: Enables SQL, Python, and dbt scripting with orchestration capabilities and native Git integration.

- Universal connectivity: Offers pre-built connectors and support for custom connectors to ingest structured and unstructured data.

- Pushdown architecture: Generates native SQL that runs inside cloud data platforms for performance and security.

- Agentic AI support: Includes AI-driven capabilities to assist with building and managing analytics and AI pipelines.

- Built-in security controls: Supports single sign-on (SSO), multi-factor authentication (MFA), and role-based access control (RBAC).

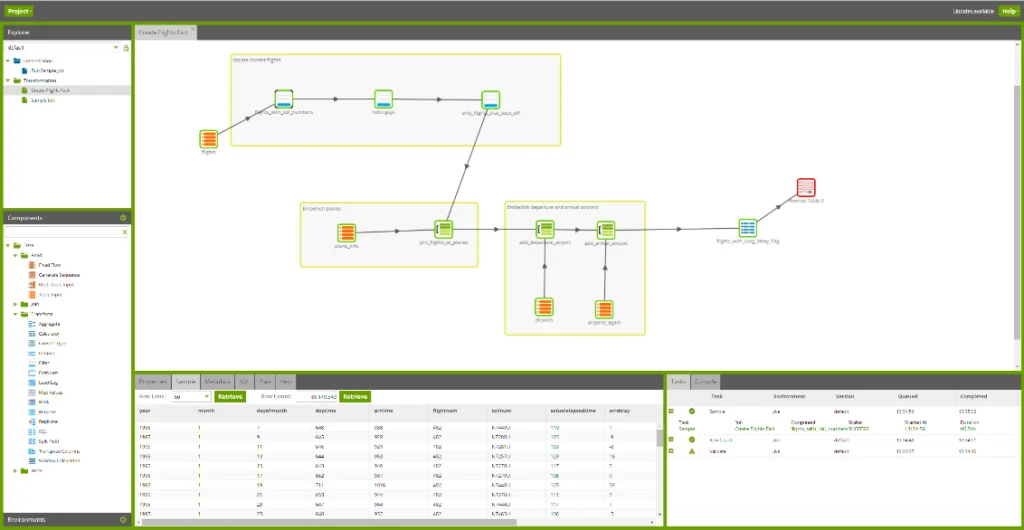

Source: Matillion
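The pushdown idea can be illustrated without any vendor tooling: instead of pulling rows out and transforming them in application code, the transformation is expressed as SQL and executed inside the database. The sketch below uses Python's built-in `sqlite3` as a stand-in for a cloud data platform. The table names and the `pushdown_totals` function are hypothetical, and this is not Matillion's API.

```python
import sqlite3


def pushdown_totals(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    """Aggregate inside the database; only the small result set leaves it."""
    conn.execute(
        "CREATE TABLE region_totals AS "
        "SELECT region, SUM(amount) AS total FROM orders GROUP BY region"
    )
    return conn.execute(
        "SELECT region, total FROM region_totals ORDER BY region"
    ).fetchall()


# In-memory SQLite stands in for the target platform in this sketch.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("emea", 10.0), ("emea", 5.0), ("apac", 7.5)],
)

print(pushdown_totals(conn))
```

The design point is that the heavy work runs where the data already lives, so the client only issues statements and reads back the summarized result.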

4. Stitch

Stitch is a cloud-based data integration service that moves data from multiple sources into data warehouses, lakes, or lakehouses. It supports automated pipeline configuration and ongoing monitoring to reduce manual operational effort. The platform connects to a range of cloud and on-premises data sources and emphasizes secure and compliant data transfer.

Key features include:

- Broad source connectivity: Connects to more than 130 cloud and on-premises data sources for centralized ingestion.

- Automated pipeline management: Allows users to configure pipelines once and then monitor and control ongoing synchronization.

- Cloud data warehouse integration: Supports syncing data to warehouses, lakes, and lakehouses for analytics.

- Secure and compliant pipelines: Designed to support secure data movement with compliance considerations.

- Operational monitoring: Provides visibility into pipeline status to minimize operational impact.

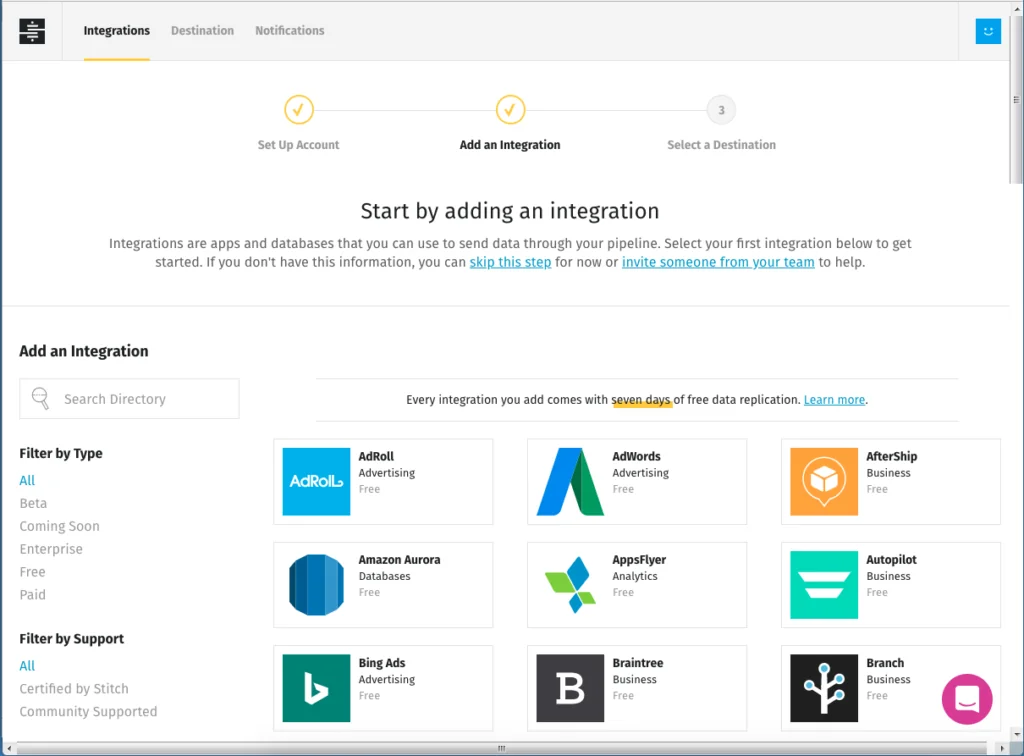

Source: Stitch

5. Hevo Data

Hevo Data is an end-to-end ELT platform that combines data extraction, loading, and transformation into a unified system. It supports ingestion through more than 150 pre-built connectors and enables near real-time database replication using change data capture (CDC). The platform provides operational visibility into pipelines and automates schema management to reduce manual intervention.

Key features include:

- Pre-built connectors: Offers over 150 connectors for databases, SaaS applications, and file systems.

- Change data capture replication: Supports near real-time database replication with minimal impact on production systems.

- Automated schema handling: Detects and manages schema changes automatically to maintain pipeline stability.

- Real-time pipeline monitoring: Provides detailed logs and visibility into system-level pipeline operations.

- Built-in transformations: Integrates dbt-based modeling for transformation within the same platform.

- Security and compliance support: Aligns with standards including GDPR, CCPA, SOC 2, HIPAA, and DORA.

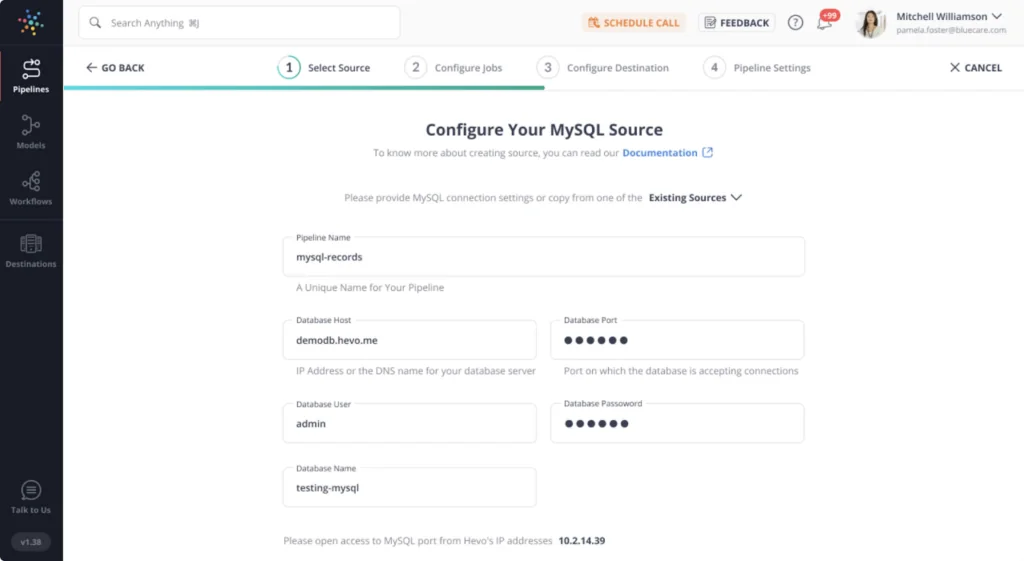

Source: Hevo Data

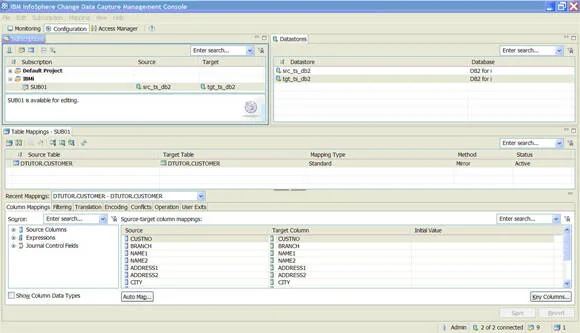

6. IBM InfoSphere

IBM InfoSphere Information Server is a data integration platform that supports data integration, quality management, and governance across on-premises and cloud environments. It includes massively parallel processing (MPP) capabilities for scalable data transformation and integration. The platform enables organizations to standardize data assets and improve information governance across heterogeneous systems.

Key features include:

- Extract, transform, load capabilities: Supports flexible ETL processes deployable on premises or in cloud environments.

- Massively parallel processing: Provides scalable MPP architecture for large-scale data transformations.

- Data governance framework: Helps discover IT assets and define standardized business terminology.

- Data quality management: Includes tools to cleanse, assess, analyze, and monitor data quality.

- Multi-environment deployment: Supports on-premises, private cloud, public cloud, and hybrid deployments.

Source: IBM

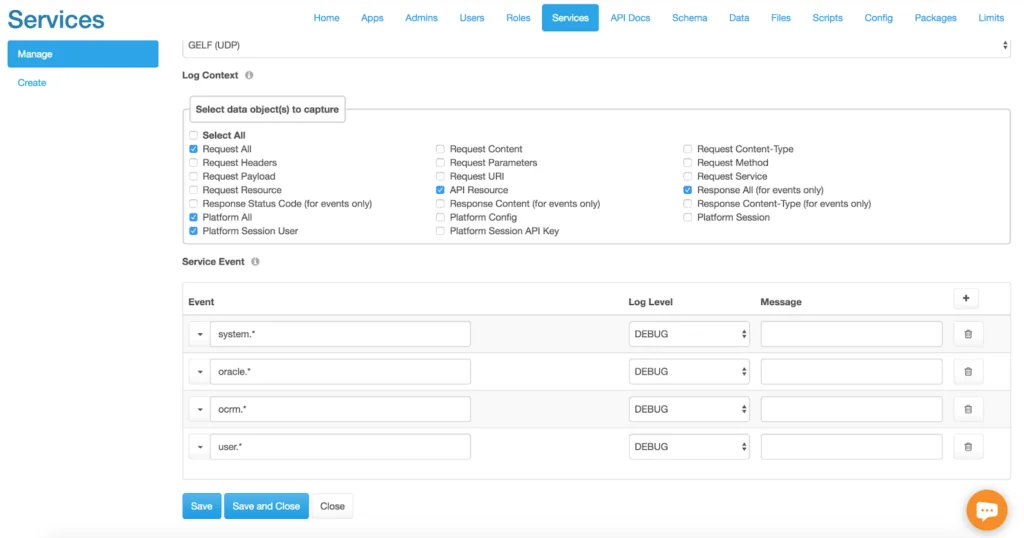

7. Integrate.io

Integrate.io is a low-code data pipeline platform that automates operational ETL, reverse ETL, and database replication workflows. It enables technical and non-technical users to build pipelines using visual tools and predefined transformations. The platform supports database replication with change data capture and provides structured onboarding and support services.

Key features include:

- Low-code pipeline builder: Allows users to design and manage pipelines without extensive coding.

- Database replication with CDC: Supports near real-time replication with sub-minute latency.

- Prebuilt connectors: Connects to more than 150 data sources and destinations, including bidirectional integrations.

- Built-in transformations: Provides over 220 table- and field-level transformations for data preparation.

- Pipeline orchestration and scheduling: Enables job scheduling, dependency management, and workflow automation.

- Security and compliance focus: Emphasizes adherence to data security laws and best practices with dedicated support.

Source: Integrate.io

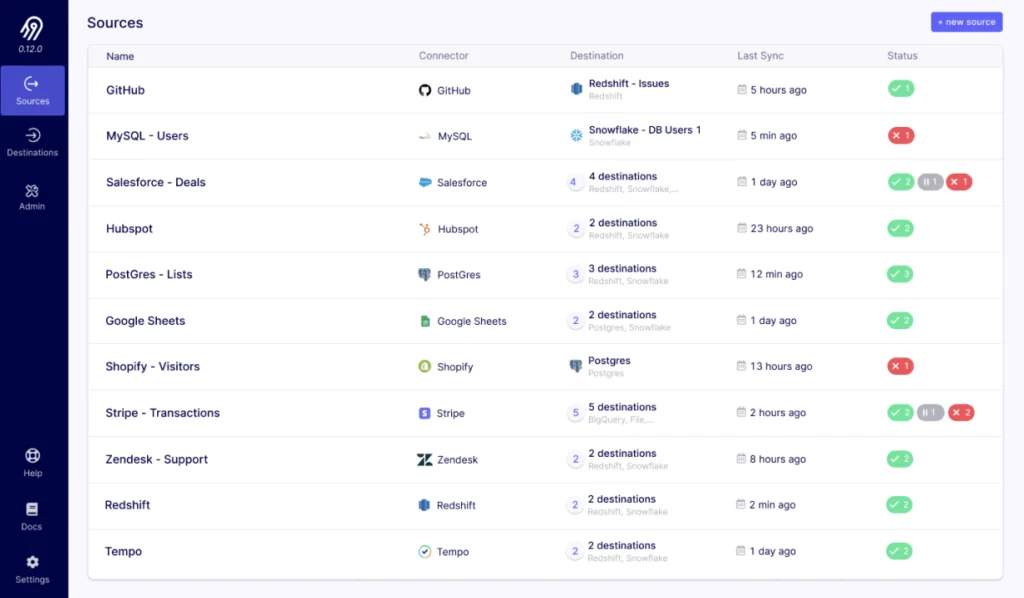

8. Airbyte

Airbyte is a data integration platform that provides an infrastructure layer for ELT pipelines and AI agent workflows. Built on an open-source foundation, it supports batch and change data capture (CDC) replication for analytics use cases. The platform enables governed access to data across systems and includes connectors for pipeline and AI-driven workflows.

Key features include:

- Open-source foundation: Provides an extensible integration layer built on open-source technology.

- Batch and CDC replication: Supports traditional ELT pipelines as well as change data capture for incremental updates.

- Unified integration layer: Delivers a single platform for data pipelines and AI agent data access.

- Connector-based architecture: Uses direct connectors to access and move data across systems.

- Governed data access: Enables controlled access, search, and action across distributed data systems.

Source: Airbyte
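Several of the tools above mention change data capture (CDC). As a generic illustration of how it differs from a full batch copy, the sketch below applies a stream of change events to a replica so that only rows that changed are touched. The event format (`seq`, `op`, `key`, `row`) and the `apply_changes` function are assumptions made for this example and do not match any vendor's actual format.

```python
def apply_changes(replica: dict, events: list[dict]) -> dict:
    """Apply insert/update/delete events to the replica in sequence order."""
    for event in sorted(events, key=lambda e: e["seq"]):
        op = event["op"]
        if op in ("insert", "update"):
            replica[event["key"]] = event["row"]
        elif op == "delete":
            replica.pop(event["key"], None)  # tolerate deletes of unknown keys
        else:
            raise ValueError(f"unknown operation: {op}")
    return replica


# Replica state after an initial batch load (hypothetical data).
replica = {1: {"name": "alpha"}, 2: {"name": "beta"}}

# Changes captured from the source since that load, possibly out of order.
events = [
    {"seq": 3, "op": "delete", "key": 1},
    {"seq": 1, "op": "update", "key": 2, "row": {"name": "beta-v2"}},
    {"seq": 2, "op": "insert", "key": 3, "row": {"name": "gamma"}},
]

print(apply_changes(replica, events))
```

Ordering by sequence number matters: applying the same events in a different order could leave the replica in a state the source never had.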

Conclusion

A well-planned data center migration requires the right tools to ensure efficiency, security, and minimal disruption. These tools help automate critical processes, maintain data integrity, and support compliance with industry standards. By leveraging migration solutions, organizations can reduce risks, simplify transitions, and optimize performance in their new environment.