Introducing OpenShift and VMware

VMware is a mature hardware virtualization platform for traditional VMs that emphasizes granular control and stability. OpenShift is a Kubernetes-native container platform that also runs VMs through OpenShift Virtualization. It offers a unified environment for hybrid cloud, DevOps, and microservices, with an emphasis on speed, automation, and consistency across container and VM workloads.

VMware:

- Focus: Traditional virtualization, running multiple VMs on physical hardware.

- Strengths: Mature platform, superior VM-level performance (especially with ESXi), established enterprise security, granular control (vMotion, DRS).

- Best for: Legacy applications, stable enterprise environments, deep control over VM lifecycle.

- Challenges: Can lead to silos, complexity, potential vendor lock-in, less aligned with containerized workflows.

OpenShift (virtualization):

- Focus: Container orchestration (Kubernetes) with integrated VM management (KubeVirt).

- Strengths: Unified platform for VMs & containers, DevOps/CI/CD integration, scalability, hybrid cloud support, automation.

- Best for: Cloud-native apps, microservices, hybrid/multi-cloud, modernizing legacy apps.

- Challenges: Steeper learning curve (Kubernetes), complexity in setup, can be costly.

Key differences and when to choose:

- Architecture: VMware virtualizes hardware (hypervisor), OpenShift orchestrates containers with built-in VM support.

- Workloads: VMware for traditional VMs; OpenShift for containers, modern apps, and mixed environments.

- Management: VMware uses vCenter for VMs; OpenShift uses Kubernetes (operators, APIs) for both.

- Modernization: OpenShift offers a pathway to run VMs and containers on a single, consistent platform, reducing silos.

Table of Contents

- Introducing OpenShift and VMware

- How VMware Works

- How OpenShift Works

- OpenShift Virtualization vs. VMware: Key Differences

- OpenShift Strengths and Challenges

- Tips from the Expert

- VMware Strengths and Challenges

- VMware vs. OpenShift: Which to Choose

- Observability in Virtualized Environments with Faddom Dependency Mapping

How VMware Works

VMware works by virtualizing physical computing resources so multiple virtual machines (VMs) can run on a single physical server. Instead of installing an operating system directly on hardware, VMware inserts a virtualization layer called a hypervisor between the hardware and the operating systems. This layer abstracts the underlying physical resources—CPU, memory, storage, and networking—and presents them as virtual hardware to each VM.

Here’s a look at the main components of VMware’s architecture:

- Hypervisor: The hypervisor is responsible for creating and running virtual machines while ensuring that each VM operates in an isolated environment. Every VM has its own virtual CPU, memory allocation, storage, and network interfaces. Because of this isolation, multiple operating systems can run simultaneously on the same physical machine without interfering with each other.

- ESXi hypervisor: ESXi installs directly on the physical server and manages the execution of virtual machines. It allocates hardware resources to each VM and ensures that workloads share the server efficiently. By controlling resource allocation, the hypervisor allows several virtual systems to run concurrently while maintaining stability and predictable performance.

- vCenter Server: VMware environments are typically managed through vCenter Server, which provides centralized control over the virtualization infrastructure. Instead of managing individual servers independently, administrators can use vCenter to monitor and manage hosts, clusters, virtual machines, networking, and storage from a single interface.

- vSphere platform: Within a VMware deployment, vSphere combines ESXi hosts and vCenter management into a unified virtualization system. vSphere provides the tools needed to create, configure, and operate virtual machines across multiple servers. This architecture allows organizations to consolidate workloads onto fewer physical machines while maintaining control over resource allocation and system availability.
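
As a rough illustration of how a hypervisor hands out a host's physical capacity to isolated VMs, here is a toy Python model. It is not VMware code: `Host`, `VM`, and `place` are hypothetical names, and the no-overcommit rule is a simplifying assumption (real hypervisors such as ESXi support overcommit, shares, and reservations).

```python
from dataclasses import dataclass, field


@dataclass
class VM:
    """Virtual hardware requested by one guest (hypothetical model)."""
    name: str
    vcpus: int
    memory_gb: int


@dataclass
class Host:
    """Toy stand-in for a physical server running a hypervisor."""
    cpus: int
    memory_gb: int
    vms: list = field(default_factory=list)

    def free_cpus(self) -> int:
        return self.cpus - sum(vm.vcpus for vm in self.vms)

    def free_memory_gb(self) -> int:
        return self.memory_gb - sum(vm.memory_gb for vm in self.vms)

    def place(self, vm: VM) -> bool:
        """Admit the VM only if its virtual hardware fits in what is left.

        Assumption: no overcommit, unlike a real hypervisor.
        """
        if vm.vcpus <= self.free_cpus() and vm.memory_gb <= self.free_memory_gb():
            self.vms.append(vm)
            return True
        return False


host = Host(cpus=16, memory_gb=64)
results = [
    host.place(VM("web", vcpus=4, memory_gb=16)),
    host.place(VM("db", vcpus=8, memory_gb=32)),
    host.place(VM("batch", vcpus=8, memory_gb=8)),  # rejected: only 4 CPUs left
]
print(results, host.free_cpus(), host.free_memory_gb())
```

Each VM sees only the virtual CPU and memory it was granted, and the host refuses a placement that would exceed its physical capacity.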

VMware also integrates with cloud environments. Because virtualization separates workloads from the underlying hardware, virtual machines can be moved or extended into public or hybrid cloud infrastructures while maintaining the same operational model. Organizations can run workloads on-premises while expanding capacity into cloud environments when necessary.

Security is built into the virtualization layer. VMware supports features such as network segmentation to isolate virtual machines and reduce the risk of unauthorized access between workloads. Encryption capabilities help protect data stored within the virtual infrastructure and during transmission across networks. These controls help maintain secure and compliant environments.

How OpenShift Works

OpenShift Container Platform is built on Kubernetes, an open source system for orchestrating containerized applications. OpenShift extends Kubernetes with integrated tools and services that manage the full lifecycle of container workloads, from building container images to deploying, scaling, and operating applications across clusters.

Here’s a look at the relevant components:

- Containers: Applications in OpenShift run inside containers, which are lightweight environments that package an application together with its dependencies. Containers are created from container images and executed on cluster nodes. A single node can run many containers, depending on the available CPU and memory resources.

- Pods: This is the smallest deployable unit in OpenShift. A pod contains one or more containers that run together on the same node and share resources such as networking and storage volumes. Pods represent a single application component. More complex applications are built by combining multiple pods that communicate with each other inside the cluster.

- Replica sets and replication controllers: These components maintain a desired number of pod instances. If a pod fails or is removed, the platform automatically creates new instances to maintain the required capacity. If too many pods are running, the system reduces the count to match the defined configuration.

- Deployment and DeploymentConfig: These objects control application rollout and updates. Each definition specifies the container image to run, how many replicas should exist, and how updates should be performed. Deployment is the standard Kubernetes object; DeploymentConfig is an OpenShift-specific equivalent that Red Hat has deprecated in favor of Deployment. The platform can perform rolling updates or other deployment strategies to maintain availability while new versions are released.

- Services: Used for networking between application components, a service defines a stable endpoint for a group of pods and provides internal load balancing. Because pods can be created and removed dynamically, services provide a consistent address that other components can use to communicate with an application.

- Routes: OpenShift uses routes to expose applications externally. A route assigns a publicly reachable hostname to a service, allowing external users or systems to access applications running inside the cluster. The routing layer connects external traffic to the appropriate internal service and pods.

- Nodes: OpenShift clusters are composed of nodes, which provide the compute resources used by containers. In OpenShift 4, nodes typically run Red Hat Enterprise Linux CoreOS (RHCOS), a lightweight, container-focused operating system. Each node also runs a container runtime (CRI-O in OpenShift 4, which replaced the Docker engine used in OpenShift 3) to execute container images. A Kubernetes agent called the kubelet runs on each node and is responsible for registering the node with the cluster and executing the workloads assigned to it.

- Operators: OpenShift uses these to automate the management of applications and platform components. Operators encode operational knowledge into software so that tasks such as installation, scaling, upgrades, backups, and recovery can be executed automatically within the Kubernetes environment.
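
The replica behavior described in the list above (replace failed pods, trim surplus ones) boils down to a reconciliation loop. The following is a minimal Python sketch of that idea, not the actual controller code: `reconcile` is a hypothetical helper, and pods are plain strings here, whereas a real controller creates and deletes Pod objects through the Kubernetes API.

```python
def reconcile(desired_replicas: int, running_pods: list[str]) -> list[str]:
    """Return the pod list after one reconciliation pass (illustrative only)."""
    pods = list(running_pods)
    next_id = 0
    # Too few pods: create replacements until the count matches.
    while len(pods) < desired_replicas:
        name = f"web-{next_id}"  # hypothetical naming scheme
        if name not in pods:
            pods.append(name)
        next_id += 1
    # Too many pods: remove the surplus.
    while len(pods) > desired_replicas:
        pods.pop()
    return pods


scaled_up = reconcile(3, ["web-0"])
scaled_down = reconcile(2, ["web-0", "web-1", "web-2", "web-3"])
print(scaled_up)    # ['web-0', 'web-1', 'web-2']
print(scaled_down)  # ['web-0', 'web-1']
```

The key design point is that the operator declares the desired count once, and the platform keeps converging the actual state toward it.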

The platform includes additional services for monitoring, logging, networking, and cluster management. OpenShift also provides an integrated image registry and a catalog of software components through OperatorHub. These features allow teams to build container images, store them in registries, and deploy them across the cluster.
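
To make the Deployment, Service, and Route objects described earlier more concrete, the sketch below builds one of each as Python dictionaries and prints them as JSON, a format the Kubernetes API accepts alongside YAML. The application name, the `app: web` label, the image `quay.io/example/web:1.0`, and the hostname are placeholders chosen for illustration, not values from any real cluster.

```python
import json

APP_LABELS = {"app": "web"}  # hypothetical label tying the three objects together

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 3,
        "selector": {"matchLabels": APP_LABELS},
        "strategy": {"type": "RollingUpdate"},
        "template": {
            "metadata": {"labels": APP_LABELS},
            "spec": {
                "containers": [
                    {
                        "name": "web",
                        "image": "quay.io/example/web:1.0",  # placeholder image
                        "ports": [{"containerPort": 8080}],
                    }
                ]
            },
        },
    },
}

# The Service selects pods by label and gives them one stable address.
service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {"selector": APP_LABELS, "ports": [{"port": 80, "targetPort": 8080}]},
}

# The Route exposes the Service under an external hostname (placeholder).
route = {
    "apiVersion": "route.openshift.io/v1",
    "kind": "Route",
    "metadata": {"name": "web"},
    "spec": {
        "host": "web.apps.example.com",
        "to": {"kind": "Service", "name": "web"},
        "port": {"targetPort": 8080},
    },
}

for manifest in (deployment, service, route):
    print(json.dumps(manifest, indent=2))
```

Note how the objects reference each other only by name and label: the Service finds pods through the selector, and the Route points at the Service, so pods can come and go without any of the addresses changing.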

OpenShift Virtualization vs. VMware: Key Differences

1. Focus

OpenShift Virtualization enables organizations to manage both containers and virtual machines within a unified Kubernetes platform. Its aim is modernization, bringing legacy workloads closer to cloud-native operations without abandoning existing VM-based applications. OpenShift offers a pathway for application modernization by allowing VMs to coexist with containers, all governed by Kubernetes-native interfaces, policies, and automation.

VMware focuses on traditional virtualization and infrastructure consolidation. While VMware supports cloud-native workloads through integrations and products like Tanzu, its foundational model is based around abstracting hardware to run VMs efficiently and securely. VMware’s main value proposition is delivering stability, reliability, and virtualization for data center resources.

2. Architecture

OpenShift Virtualization operates as an extension to OpenShift’s Kubernetes environment. It leverages KubeVirt to enable VM management as first-class Kubernetes resources. That means virtualization becomes a part of the same platform used for containers, allowing unified networking, storage, and lifecycle management through Kubernetes APIs. OpenShift’s architecture is cloud-agnostic, supporting hybrid deployment models and CI/CD workflows.
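
To show what "VMs as first-class Kubernetes resources" can look like, here is a minimal sketch of a KubeVirt `VirtualMachine` object built as a Python dictionary. The VM name, label, sizing, and the container disk image are illustrative assumptions; a production definition would normally add persistent storage, cloud-init configuration, and network settings appropriate to the cluster.

```python
import json

vm = {
    "apiVersion": "kubevirt.io/v1",
    "kind": "VirtualMachine",
    "metadata": {"name": "legacy-app-vm", "labels": {"app": "legacy-app"}},
    "spec": {
        "runStrategy": "Always",  # keep the VM running, restart it if it stops
        "template": {
            "metadata": {"labels": {"app": "legacy-app"}},
            "spec": {
                "domain": {
                    "cpu": {"cores": 2},
                    "resources": {"requests": {"memory": "4Gi"}},
                    "devices": {
                        "disks": [{"name": "rootdisk", "disk": {"bus": "virtio"}}],
                        "interfaces": [{"name": "default", "masquerade": {}}],
                    },
                },
                "networks": [{"name": "default", "pod": {}}],
                "volumes": [
                    {
                        "name": "rootdisk",
                        # Assumed example image; substitute your own disk source.
                        "containerDisk": {"image": "quay.io/containerdisks/fedora:latest"},
                    }
                ],
            },
        },
    },
}

print(json.dumps(vm, indent=2))
```

The object has the same `apiVersion`, `kind`, `metadata`, and `spec` layout as any other Kubernetes resource, which is what lets the same APIs, labels, and tooling apply to VMs and containers alike.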

VMware’s architecture revolves around its hypervisor technology, especially ESXi, which sits directly on server hardware to manage multiple VMs per host. Its vCenter Server provides centralized management for clusters, networking, and storage, while additional products extend into areas like automation and software-defined data centers (SDDC). VMware’s architecture is mature and integrated with traditional enterprise IT environments.

3. Workloads

OpenShift Virtualization accommodates both containerized and VM-based workloads within the same platform. This makes it suitable for organizations migrating legacy applications toward cloud-native paradigms, as well as those running greenfield container deployments. Developers and operators can manage, schedule, and scale both workload types using Kubernetes tools, reducing silos between infrastructure and application teams.

VMware is intended predominantly for VM-based workloads, covering a wide range of operating systems and application types, including those that have not been containerized. Its ecosystem supports mission-critical and legacy enterprise applications, as well as large-scale business systems that require stability and backward compatibility. Although VMware now offers container support via integrations, its main strength remains in managing VMs.

4. Management

Management in OpenShift Virtualization is Kubernetes-native, using declarative configurations and YAML manifests for defining both VMs and container applications. Administrators and developers benefit from consistent APIs and interfaces, enabling automation through tools like Ansible or GitOps workflows. Built-in monitoring, logging, and policy enforcement are available within the same platform, reducing complexity and simplifying operations.
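
The declarative, GitOps-style workflow mentioned above rests on comparing the desired state (what is stored in Git) with the live state (what the cluster reports). Below is a simplified Python sketch of that comparison. `find_drift` is a hypothetical helper, and the two dictionaries are made-up stand-ins for real manifests; actual GitOps controllers handle defaulting, lists, and server-side fields in far more detail.

```python
def find_drift(desired: dict, live: dict, path: str = "") -> list[str]:
    """List fields where the live object differs from the declared one.

    Only fields present in `desired` are checked, so server-populated
    fields such as `status` are ignored.
    """
    drift = []
    for key, want in desired.items():
        here = f"{path}.{key}" if path else key
        have = live.get(key)
        if isinstance(want, dict) and isinstance(have, dict):
            drift.extend(find_drift(want, have, here))
        elif want != have:
            drift.append(f"{here}: want {want!r}, have {have!r}")
    return drift


# Made-up example: someone scaled the workload by hand in the cluster.
desired = {"spec": {"replicas": 3, "template": {"image": "web:1.1"}}}
live = {
    "spec": {"replicas": 2, "template": {"image": "web:1.1"}},
    "status": {"readyReplicas": 2},
}

report = find_drift(desired, live)
print(report)  # ['spec.replicas: want 3, have 2']
```

Because VMs and containers are both described by manifests, the same comparison applies to either workload type.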

VMware offers centralized management through vCenter, providing tooling for VM lifecycle, performance monitoring, patch management, backups, and high availability. Its management stack is feature-rich, mature, and optimized for large-scale data centers, but it is distinct from Kubernetes-native workflows. Integration with automation tools like vRealize Orchestrator is possible, but cross-platform unification typically requires additional products or complexity.

5. Modernization

With OpenShift Virtualization, modernization involves moving legacy VMs into a cloud-native environment, enabling gradual refactoring or eventual conversion to containers. Organizations can adopt DevOps practices, CI/CD pipelines, and Kubernetes-native automation for both new and existing workloads. By bridging VMs and containers, OpenShift reduces friction in the modernization process.

VMware’s modernization approach involves integrating with other platforms or leveraging VMware Tanzu for Kubernetes support. However, the core VM infrastructure remains separate from container-native operations unless additional components are added. For enterprises with significant legacy investment, VMware provides stability and migration paths, but achieving full modernization may involve more steps and interoperability challenges.

6. Use Cases

OpenShift Virtualization is well suited for organizations seeking to consolidate containerized and legacy applications on a single platform, for example as part of a digital transformation, application modernization, or hybrid cloud strategy. It benefits DevOps teams looking to operate VM and container workloads side by side, enabling smoother migrations and experimentation with cloud-native architecture without discarding legacy investments.

VMware is typically chosen for data center virtualization, disaster recovery, mission-critical applications, and scenarios requiring support for legacy operating systems or monolithic applications. Its established presence in traditional enterprise IT makes VMware the default choice for organizations prioritizing VM density, high availability, and compatibility. Heavily regulated industries often prefer VMware’s feature set and ecosystem integration.


OpenShift Strengths and Challenges

OpenShift delivers a platform for running both containers and virtual machines, making it a valuable tool for organizations undergoing digital transformation. Its Kubernetes-native approach helps unify operations, enforce security policies, and support modern DevOps workflows, all while enabling legacy workload support via OpenShift Virtualization.

Strengths

- Native Kubernetes integration with support for both VMs and containers

- Built-in CI/CD pipelines, monitoring, and logging tools

- Consistent API and declarative management across workload types

- Security defaults, including SELinux and role-based access control (RBAC)

- Flexible deployment options: on-premises, public cloud, or hybrid

- Developer-friendly with integrated tools (e.g., Source-to-Image, OpenShift Pipelines)

Challenges

- Steeper learning curve for teams unfamiliar with Kubernetes concepts

- Higher operational complexity compared to traditional virtualization platforms

- Resource-intensive platform, requiring careful infrastructure planning

- Fewer mature third-party integrations than VMware in legacy enterprise environments

- Limited support for some Windows workloads compared to dedicated hypervisors

Lanir specializes in founding new tech companies for enterprise software: assembling and nurturing a great team, and taking companies from early-stage funding to late-stage growth, from one design partner to hundreds of enterprise customers, from MVP to enterprise-grade product, from low-level kernel engineering to AI/ML and big data, and from one advisory board to a long list of shareholders and board members from the world’s largest VCs.

Tips from the Expert

In my experience, here are tips that can help you better decide (and execute) an OpenShift vs VMware strategy without creating new silos or migration regret:

- Decide based on “operating model fit,” not feature checklists: VMware wins when your org optimizes around infra-runbooks and change control; OpenShift wins when your org optimizes around GitOps, pipelines, and self-service. If you pick the platform that doesn’t match how teams actually work, you’ll pay forever in friction.

- Inventory the “VMware invisible dependencies” before you move anything: Many environments rely on VMware-side behaviors (DRS rules, affinity/anti-affinity, backup proxies, VLAN constructs, templates, golden images, custom scripts). Document these as requirements so you don’t “migrate the VM” but lose the operating ecosystem.

- Use a two-lane policy: “VMs as pets” vs “VMs as cattle”: On OpenShift Virtualization, push as many VMs as possible into declarative, template-driven lifecycle (cattle). Keep only the truly fragile ones in a special lane with tighter change gates and isolated capacity (pets). Mixing them destroys predictability.

- Treat networking parity as the hardest migration workstream (not storage): Most “it worked on vSphere” issues come from L2/L3 assumptions: broadcast reliance, asymmetric routing, firewall zones, load balancer behavior, IP stickiness, and ACL ownership. Do a network parity test plan per app before platform selection is final.

- Benchmark the right thing: tail latency under contention, not peak throughput: ESXi often looks great in clean benchmarks. Kubernetes-based virtualization can look fine too until you add noisy neighbors, overlays, and storage jitter. Measure P95/P99 app latency with realistic contention to avoid picking a platform on misleading numbers.
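
To illustrate the last tip, the short Python sketch below computes P50, P95, and P99 from a set of latency samples using the nearest-rank method. The sample values are invented for illustration (mostly fast requests with two slow outliers, as you might see under contention), and `percentile` is a hypothetical helper rather than part of any benchmarking tool.

```python
import math
import statistics


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Invented request latencies in milliseconds.
latencies_ms = [12, 11, 13, 12, 14, 11, 12, 13, 12, 15,
                12, 11, 13, 12, 14, 12, 13, 11, 95, 240]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
p99 = percentile(latencies_ms, 99)
mean = statistics.mean(latencies_ms)

print(f"mean={mean:.1f} ms  p50={p50} ms  p95={p95} ms  p99={p99} ms")
# mean=27.9 ms  p50=12 ms  p95=95 ms  p99=240 ms
```

The median looks healthy while the tail is an order of magnitude worse, which is exactly the kind of behavior that averages and peak-throughput numbers hide.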

VMware Strengths and Challenges

VMware provides a stable and feature-rich virtualization stack, optimized for traditional data centers and mission-critical applications. Its tools and ecosystem offer enterprise-grade reliability, making it a long-standing choice for infrastructure teams.

Strengths

- Proven, stable virtualization platform with wide industry adoption

- Tooling for VM lifecycle, resource management, and HA configurations

- Ecosystem with integrations for storage, networking, backup, and monitoring

- Backward compatibility for legacy OS and applications

- Support and documentation for enterprise environments

Challenges

- Primarily focused on VMs; container support requires additional components

- Less alignment with Kubernetes-native workflows and automation

- Licensing and support costs can be high for large-scale deployments

- Modernization paths often require additional VMware products or platforms (e.g., Tanzu)

- Siloed management between VMs and containers without integration efforts

VMware vs. OpenShift: Which to Choose

The decision between VMware and OpenShift depends on an organization’s current infrastructure, technical goals, and modernization strategy.

When to choose VMware:

Organizations with a strong investment in virtual machine-based infrastructure, especially those running legacy applications or requiring support for older operating systems, often benefit from VMware. Its ecosystem, reliability, and operational familiarity make it the go-to choice for enterprises prioritizing stability, uptime, and integration with existing data center tools.

When to choose OpenShift:

OpenShift is better suited for organizations pursuing digital transformation or cloud-native strategies. If the goal is to consolidate containerized and VM-based workloads under a Kubernetes-native platform, adopt DevOps practices, or simplify CI/CD pipelines, OpenShift offers a more integrated and forward-looking solution. Its support for both container and VM workloads allows for gradual modernization without forcing immediate migration.

Other considerations

- Cost, complexity, and team skill sets also play a role. VMware typically involves higher licensing costs but delivers ease of management for VM-heavy environments. OpenShift may require greater upskilling and infrastructure planning but provides more flexibility and alignment with modern application architectures.

- In many cases, a hybrid approach can be viable. Organizations may continue to run critical workloads on VMware while adopting OpenShift for new development, gradually shifting more workloads as maturity with Kubernetes grows.

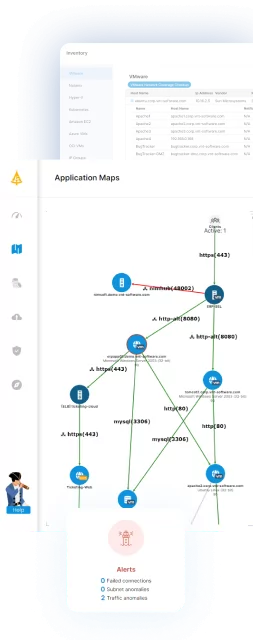

Observability in Virtualized Environments with Faddom Dependency Mapping

As organizations navigate the shift between traditional virtualization and cloud-native platforms, visibility becomes a critical factor in making the right decision and ensuring long-term success. Whether running workloads on VMware, OpenShift, or a combination of both, understanding how applications, virtual machines, and services interact is essential for maintaining stability, avoiding disruption, and supporting modernization efforts.

Faddom helps bridge this gap by providing real-time, agentless application dependency mapping across hybrid environments. By automatically discovering assets and visualizing connections between VMs, containers, and services, it enables teams to manage change with confidence, improve documentation, and reduce security risks. With capabilities like Compass AI, teams can even interact with their infrastructure in real time, gaining instant answers directly from their environment and turning complex systems into actionable insights.